Your AI Agents Have Amnesia. Here's What We're Doing About It.


Let me tell you about the moment I realized we had a serious problem.

It wasn't a dramatic system failure. No alarms went off. Nobody lost data. What happened was much quieter than that, and honestly, that made it worse.

I was watching two AI agents in our own ecosystem handle what should have been a routine workflow. One had learned something critical about a client's preferences earlier in the week. The other, working on a related task just days later, had no idea. It started from scratch. Made assumptions. Produced work that contradicted what we'd already established.

I've been building with AI since before most people had a framework for thinking about it. I understand how these systems work. But watching this happen in our own house, with our own infrastructure, was still frustrating.

Here's the thing: we had smart agents. We had capable models. What we didn't have was shared memory. And without that, everything else (the intelligence, the speed, the capability) just compounds into a different kind of chaos.

That's the problem I want to talk about today.

The Amnesia Problem Nobody's Talking About

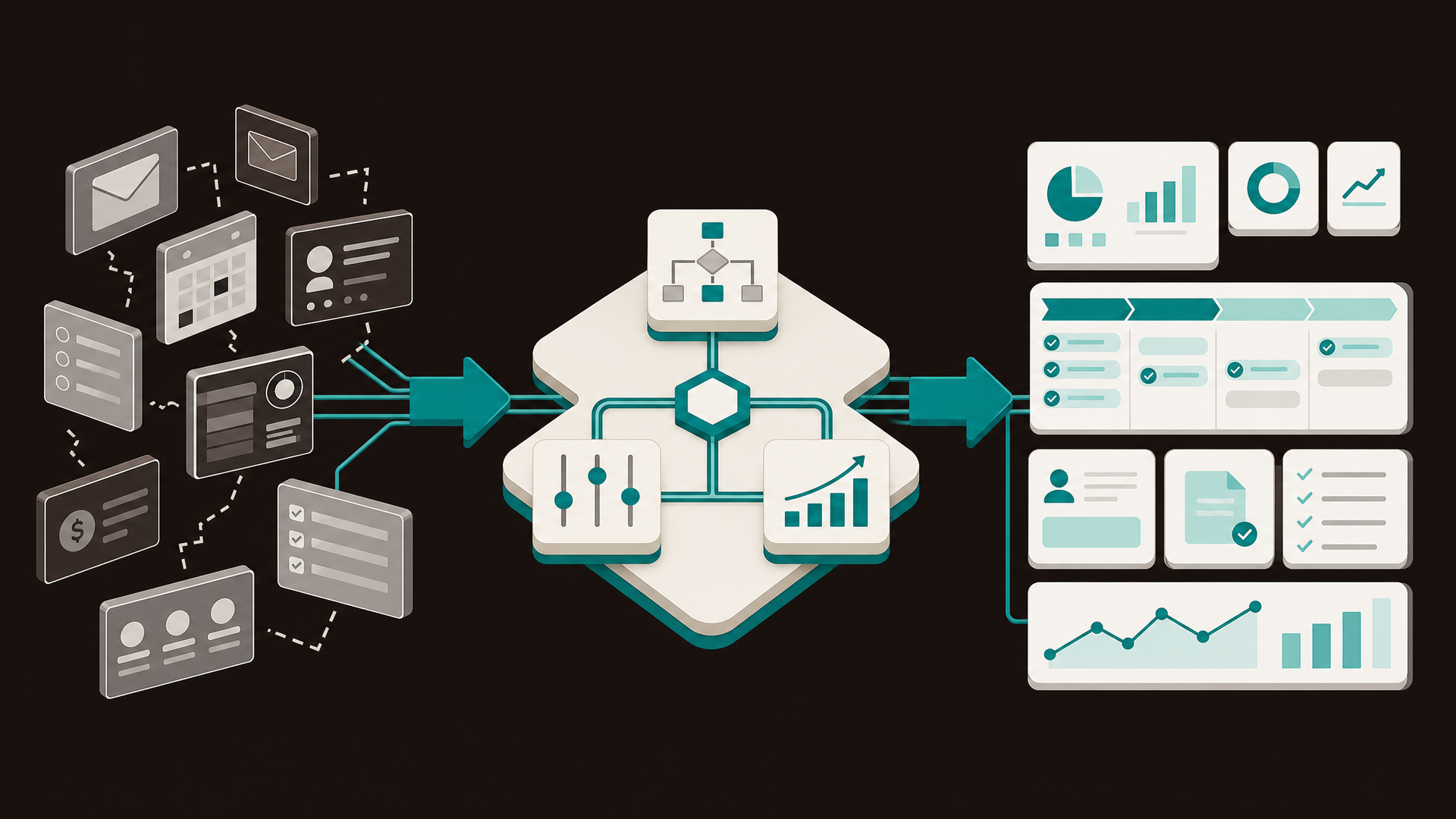

If you're leading a growing company right now, your team is almost certainly using AI in some form. Your developers have coding assistants. Your marketing team has custom GPTs or Claude Projects. Your operations team is experimenting with automation workflows.

Each of those tools is probably doing its job reasonably well in isolation.

But here's what I've noticed in virtually every organization that starts scaling AI: the tools don't talk to each other. More specifically, they don't remember together.

Your customer service agent and your sales agent can both interact with the same client, and neither one knows what the other knows. Your coding tools have no shared repository of institutional learnings. The moment you try to coordinate across models, tools, or even across time, the continuity breaks.

Every agent starts from scratch.

In practice this means repeated work. Contradictory decisions. The same questions asked of the same clients, twice, by two different AI touchpoints. And perhaps most costly: zero compounding. You get the same performance on day 300 that you got on day one.

A single capable AI agent with no memory is a useful tool. But a network of capable agents with no shared memory is just chaos with good intentions.

What We Actually Built

The Central Memory Hub (CMH) started as a solution to a very specific problem inside OpenClaw, our internal multi-agent AI ecosystem at Foundari.

We had Nix, my AI Chief of Staff, handling executive coordination. We had Jr, a creative production agent. We had Claude handling everything from architectural decisions to client deliverables. These were capable agents. But every time they needed to share context, we were doing it manually. Copy-pasting. Summarizing. Bridging the gap ourselves.

That's not a system. That's duct tape with API keys.

So I set out to build a proper memory layer. Not a chatbot history function. Not a session log. A genuine organizational memory infrastructure that any agent, on any model, at any time, could read from and write to.

The result is CMH: a Flask and PostgreSQL backend with Pinecone vector search powering semantic memory retrieval, now fully exposed via a 31-tool MCP server that any AI agent can interact with through natural language.

Here's what it actually does:

➔ Stores unstructured memory with semantic retrieval. An agent can store any context, decision, observation, or learning, and any other agent can later retrieve it using a meaning-based search. Not keyword matching. Actual semantic relevance.

➔ Runs a full Decision Log. Every significant decision an AI agent makes can be logged with full context: what was the input, what was the output, who made the call, and whether it's reversible. If you're running AI in any compliance-adjacent environment, this isn't optional. It's critical.

➔ Maintains an Agent Directory. You can register every AI agent in your organization, define their capabilities, set reporting hierarchies, and monitor what each one is actively working on. Your AI org chart, made real.

➔ Enables inter-agent messaging. Agents can send structured messages to each other within shared sessions, with full persistence. Nix can leave a note for Jr. Claude can flag something for a downstream agent. The conversation doesn't have to live in a single context window.

➔ Supports structured and unstructured memory side by side. Some knowledge is free-form. Some is highly structured. CMH handles both, because real organizational memory is both.
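
To make the store-and-retrieve idea concrete, here is a minimal Python sketch of one agent writing a memory and another agent finding it later. This is not CMH's actual code or API: the names SharedMemory, store, search, and toy_embed are hypothetical, and toy_embed is a plain word-count vector standing in for a real embedding model. That means this toy only matches on overlapping words; a real deployment would swap in true embeddings and a vector index to get meaning-based results.

```python
import math
from collections import Counter
from dataclasses import dataclass, field


def toy_embed(text: str) -> Counter:
    """Hypothetical stand-in for an embedding model: a word-count vector."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


@dataclass
class SharedMemory:
    """In-memory illustration of a store that every agent reads and writes."""
    records: list = field(default_factory=list)

    def store(self, agent: str, text: str) -> None:
        # Keep the author alongside the text so retrieval carries attribution.
        self.records.append({"agent": agent, "text": text, "vector": toy_embed(text)})

    def search(self, query: str, top_k: int = 1) -> list:
        # Rank every stored record by similarity to the query, best first.
        q = toy_embed(query)
        ranked = sorted(self.records, key=lambda r: cosine(q, r["vector"]), reverse=True)
        return ranked[:top_k]


memory = SharedMemory()
memory.store("nix", "client prefers weekly status updates by email")
memory.store("jr", "brand palette uses dark green and cream")

# A different agent, days later, asks a related question.
best = memory.search("how does the client want status updates")[0]
print(best["agent"], "->", best["text"])
```

The point of the sketch is the shape of the interaction: the writer and the reader never share a context window, only the store.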

Why This Changes How You Operate

I want to be direct with you, because I think there's a tendency in our space to oversell the abstract and undersell the practical.

You start building a defensible knowledge asset. Every time your AI agents solve a problem, the solution gets stored. Every time they learn something about a client, a process, a preference, that learning persists. Over time, you accumulate what I'd call an organizational memory graph: a living record of decisions, outcomes, and institutional knowledge that is entirely yours. Your competitors can't replicate it. A new vendor can't sell you a version of it. It belongs to your organization.

You get actual governance. One of the things I hear constantly from business leaders who are serious about AI is that they're nervous about accountability. Who made this decision? Why? What was the reasoning? The CMH Decision Log answers all of that. Every significant action your AI ecosystem takes can be audited, reviewed, and understood by a human. That's not a nice-to-have in 2025. It's the price of admission for responsible AI deployment at scale.
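
For a sense of what an auditable decision record can look like, here is a small Python sketch. It is an illustration under my own assumptions, not the CMH schema: the Decision and DecisionLog classes, their field names, and the recent method are all hypothetical. The fields simply mirror the four things a log entry needs to capture: the input, the output, who made the call, and whether it's reversible.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Decision:
    """One immutable log entry (hypothetical field names)."""
    agent: str            # who made the call
    input_summary: str    # what the agent was given
    output_summary: str   # what it decided
    reversible: bool      # whether the decision can be undone
    made_at: datetime     # when it happened


@dataclass
class DecisionLog:
    entries: list = field(default_factory=list)

    def record(self, decision: Decision) -> None:
        # Append-only: entries are added, never edited in place.
        self.entries.append(decision)

    def recent(self, topic: str, days: int, now: datetime) -> list:
        # Decisions from the last `days` days whose text mentions `topic`.
        cutoff = now - timedelta(days=days)
        needle = topic.lower()
        return [
            d for d in self.entries
            if d.made_at >= cutoff
            and needle in f"{d.input_summary} {d.output_summary}".lower()
        ]


now = datetime(2025, 6, 30, tzinfo=timezone.utc)
log = DecisionLog()
log.record(Decision("nix", "client asked about pricing tiers", "kept current pricing", True, now - timedelta(days=5)))
log.record(Decision("jr", "pricing page redesign", "approved new layout", True, now - timedelta(days=45)))
log.record(Decision("claude", "choose database index", "added composite index", False, now - timedelta(days=2)))

hits = log.recent("pricing", days=30, now=now)
for d in hits:
    print(d.agent, "|", d.output_summary, "| reversible:", d.reversible)
```

A human reviewer gets attribution and reversibility on every hit without having to reconstruct what happened from chat transcripts.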

You break the vendor lock-in cycle. Right now, the big hyperscalers are all building ecosystems designed to trap your organization's context inside their platforms. Your assistant's memory lives in their cloud. Your agent's history is stored in their format. The moment you want to switch models, you lose your AI's institutional memory. CMH flips that. Your memory is stored in your infrastructure. If you want to move from GPT-4 to Claude to a local open-source model tomorrow, you take your memory with you. That's real neutrality. Not marketing neutrality.

This Wasn't Built by One Person

I want to be honest about how CMH actually came to be, because I think it matters to the story.

I architected the vision. I defined the problem, designed the data model, and made the calls about what had to be true for this to work in the real world. But the memory conventions that make CMH actually useful, the schema for how memories are tagged, how context is structured, how agents reference each other's work: those were designed by Nix. My AI Chief of Staff. Running on our own OpenClaw infrastructure.

And the MCP server, the critical connector layer that lets any AI tool plug into CMH through natural language: that was built in a single session by Claude.

Three minds. One human, two AI. That's what built this.

I don't say that to be cute about it. I say it because it's proof of something I've been trying to articulate for a while: the future of building a business isn't human work plus AI work. It's human-AI collaboration at the system level. CMH itself is a product of the thing it's designed to enable.

Why We're Open-Sourcing It

We could have built CMH as a product. A SaaS platform. Charged for seats or API calls. That's a reasonable business model, and I won't pretend I didn't think it through.

But here's what I kept coming back to: the multi-agent memory problem cannot be solved by a proprietary platform. Not really.

Trust requires transparency. If the infrastructure of intelligence for your organization, the layer that governs how your AI systems remember, coordinate, and act, is a black box owned by a vendor you're paying a monthly fee to, that's not infrastructure. That's dependency.

CMH is being released under the Apache 2.0 open-source license. Your engineering team can inspect every line. You can run it on your own infrastructure. You can contribute to it, fork it, and build on it.

We're not giving away our revenue model. We're establishing the category. And we believe the category we're naming, Shared Organizational Memory Infrastructure, is going to become the standard for how serious companies build with AI.

What This Means for the MCP Ecosystem

CMH's MCP server exposes all 31 tools through the Model Context Protocol standard. That means any MCP-compatible client, whether that's Claude, a custom agent, or something your team built internally, can interact with organizational memory through natural language.

"Store this decision with the context of why we made it." Done.

"What do we know about this client?" Your CMH surfaces everything relevant, across all agents, across all time.

"Show me every decision made in the last 30 days that involved our pricing strategy." There it is, with attribution.

The MCP ecosystem is in a critical early adoption window right now. The organizations that build durable memory infrastructure into their AI stack today are going to have a compounding advantage that latecomers cannot close. Institutional memory compounds with time. The longer CMH runs in your organization, the smarter your AI ecosystem gets. The gap only widens.
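
Going back to the natural-language requests quoted earlier in this section: underneath, an MCP client turns a request like "store this decision" into a JSON-RPC message using the protocol's tools/call method. The Python sketch below builds one such message. The envelope follows the Model Context Protocol, but the tool name store_decision and its arguments are hypothetical placeholders I made up for illustration, not the names of CMH's actual tools.

```python
import json


def build_tool_call(request_id: int, tool_name: str, arguments: dict) -> dict:
    """Build an MCP tools/call request as a JSON-RPC 2.0 message."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Hypothetical tool name and argument keys, for illustration only.
request = build_tool_call(
    request_id=1,
    tool_name="store_decision",
    arguments={
        "agent": "nix",
        "decision": "kept current pricing",
        "context": "client asked about pricing tiers",
        "reversible": True,
    },
)

# This string is what would travel over the transport to the server.
wire_message = json.dumps(request)
print(wire_message)
```

Because the envelope is a protocol standard rather than a vendor format, the same message works from any MCP-compatible client.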

Where We're Going From Here

CMH is live. The MCP server is operational. I'm running it inside our own ecosystem at Foundari every day, and it's doing exactly what it was designed to do.

The open-source release is the first step. We're also building CMH Cloud: a fully managed hosting solution for organizations that want the power of CMH without running their own infrastructure.

➔ For engineering teams: The GitHub repository will have everything you need to evaluate integration. The REST API is clean, the MCP tools are well-documented, and the data model is designed to be extended.

➔ For operations and business leaders: Start with the Decision Log. Pick one AI workflow in your organization that's making decisions today with no audit trail. Plug in CMH. Run it for 30 days. I'd be surprised if you didn't immediately see how much context you've been losing.

The Honest Summary

We built this because we needed it. We open-sourced it because we believe the infrastructure of intelligence shouldn't be owned by a single vendor. And we're talking about it publicly because the organizations that get ahead of this problem in the next 12 to 18 months are going to have an advantage that compounds for years.

Your agents are smart. Give them something to remember.

Justin is the founder of Foundari, an AI-native brand strategy and automation agency. To learn more about the Central Memory Hub or to join the CMH Cloud waitlist, visit centralmemoryhub.com.